This animation does an amazing job of explaining why a slightly misplaced wire label led to a shipwide blackout, which in turn led to the collapse of the Francis Scott Key Bridge. Dymo owners take note!

The mural in the Refectory at the University of Sydney tells you a lot about how that institution saw its place in the world in the 1970s (and perhaps still does).

Reading is falling off a cliff, like an endangered craft. This is clearly more about performance than interiority, but perhaps that’s okay if it leads to someone reading?

A lot of groups get unfairly blamed for the world’s ills but few have the courage to name the real problem: aphids and scale.

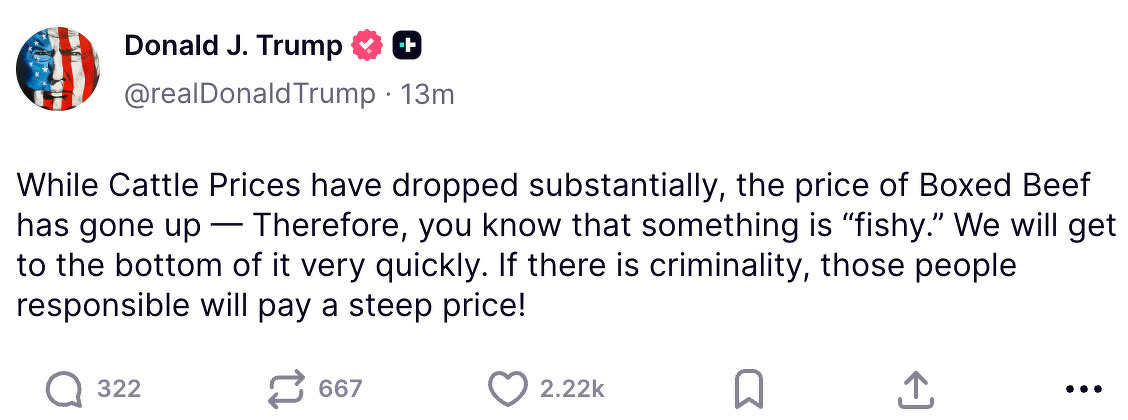

This ridiculous post (the answer is clearly his own tariffs) made me learn about “Boxed Beef”, a fascinating turn in intensive beef production that reminds me of what has happened to poultry. Sci-fi authors who thought we’d be eating vat protein got it wrong.

I grew up in a town where there were several Aboriginal deaths in custody in the ’80s. The final report of the RCIADIC was released 34 years ago.

Nothing’s changed.

Western Sydney University students and alumni were emailed to say their degrees had been “revoked”. These incidents are damaging because they mirror the officious and depersonalised tone of so much comms. If acting in more human and humane ways were the norm, these incidents wouldn’t be so harmful.